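Some situations require moving a model to another deep learning platform, so we convert it to ONNX as the interchange format. When someone sends us an ONNX model, we likewise have to convert it into our own format, but that direction loses metadata, so in practice it is rarely done.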

TensorFlow -> ONNX:

Step 1: convert the model to SavedModel format:

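import tensorflow as tf

model = tf.keras.models.load_model('outputs/model.h5')  # load the Keras HDF5 model

tf.keras.models.save_model(model, 'outputs/saved_model')  # export as a SavedModel directory

Step 2: run the conversion, choosing the opset version to match what the target actually supports.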

python -m tf2onnx.convert --saved-model saved_model --opset 10 --output model.onnx

This produces the following output:

2022-04-29 04:53:05,376 - INFO - Using tensorflow=2.8.0, onnx=1.11.0, tf2onnx=1.9.3/1190aa

2022-04-29 04:53:05,376 - INFO - Using opset <onnx, 10>

2022-04-29 04:53:19,879 - INFO - Computed 0 values for constant folding

2022-04-29 04:53:28,631 - INFO - Optimizing ONNX model

2022-04-29 04:53:28,990 - INFO - After optimization: Cast -1 (1->0), Identity -6 (6->0), Transpose -34 (36->2)

2022-04-29 04:53:29,168 - INFO -

2022-04-29 04:53:29,168 - INFO - Successfully converted TensorFlow model saved_model to ONNX

2022-04-29 04:53:29,168 - INFO - Model inputs: ['vgg16_input']

2022-04-29 04:53:29,168 - INFO - Model outputs: ['dense_1']

2022-04-29 04:53:29,168 - INFO - ONNX model is saved at model.onnx
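If the conversion needs to run from a script rather than by hand, the same command can be assembled and launched from Python. The following is only a minimal sketch: the helper names `build_tf2onnx_command` and `convert` are made up for illustration, and the only flags used are the ones from the command above (`--saved-model`, `--opset`, `--output`).

```python
import subprocess
import sys


def build_tf2onnx_command(saved_model_dir, output_path, opset=10):
    """Build the argument list for `python -m tf2onnx.convert` (hypothetical helper)."""
    return [
        sys.executable, "-m", "tf2onnx.convert",
        "--saved-model", str(saved_model_dir),
        "--opset", str(opset),
        "--output", str(output_path),
    ]


def convert(saved_model_dir, output_path, opset=10):
    """Run the conversion; raises CalledProcessError if tf2onnx exits with an error."""
    cmd = build_tf2onnx_command(saved_model_dir, output_path, opset)
    subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Same paths and opset as the command shown above.
    convert("saved_model", "model.onnx", opset=10)
```

Passing the arguments as a list avoids shell quoting problems, and `check=True` makes a failed conversion stop the script instead of passing silently.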

To verify the result, I picked another validation tool more or less at random and ran the model through it.

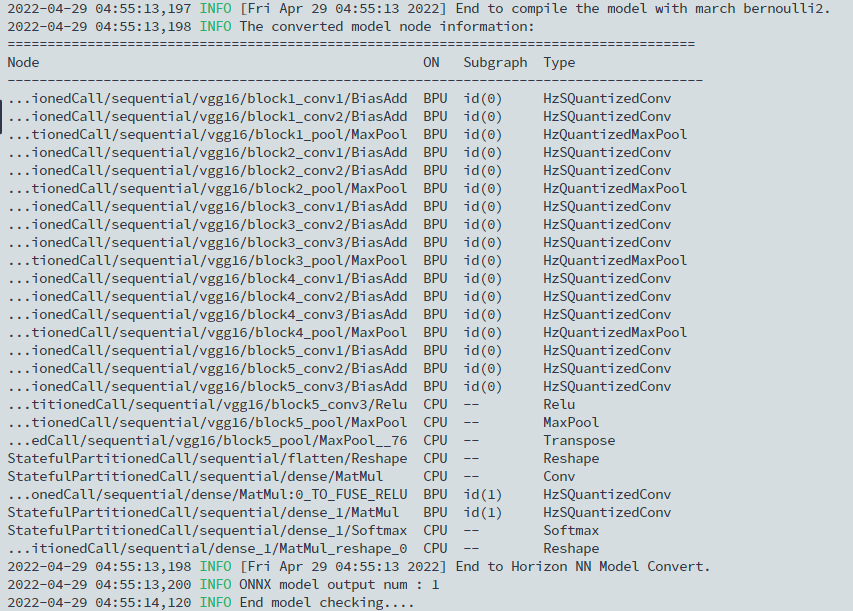

hb_mapper checker --model-type onnx --model model.onnx --march bernoulli2 --input-shape vgg16_input 1x192x192x3
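The input name passed to `--input-shape` (`vgg16_input`) is the one tf2onnx reported on its "Model inputs" line, and the shape is written as dimensions joined by `x`. As a rough sketch, assuming the conversion log was saved as text, the names can be pulled out and the shape string formatted as below; `parse_model_io` and `format_shape` are hypothetical helper names, not part of tf2onnx or hb_mapper.

```python
import ast
import re

# Matches log lines such as: "... - INFO - Model inputs: ['vgg16_input']"
_IO_LINE = re.compile(r"Model (inputs|outputs): (\[.*\])")


def parse_model_io(log_text):
    """Collect the input and output names reported in a tf2onnx log (hypothetical helper)."""
    result = {"inputs": [], "outputs": []}
    for line in log_text.splitlines():
        match = _IO_LINE.search(line)
        if match:
            # The log prints a Python-style list literal, so literal_eval can read it.
            result[match.group(1)] = ast.literal_eval(match.group(2))
    return result


def format_shape(shape):
    """Format a shape tuple as an 'x'-separated string, e.g. (1, 192, 192, 3) -> '1x192x192x3'."""
    return "x".join(str(dim) for dim in shape)


if __name__ == "__main__":
    sample_log = (
        "2022-04-29 04:53:29,168 - INFO - Model inputs: ['vgg16_input']\n"
        "2022-04-29 04:53:29,168 - INFO - Model outputs: ['dense_1']\n"
    )
    io_names = parse_model_io(sample_log)
    print(io_names["inputs"][0], format_shape((1, 192, 192, 3)))
```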